AI phobia is out, AI literacy is in

The case for responsible AI use in the classroom

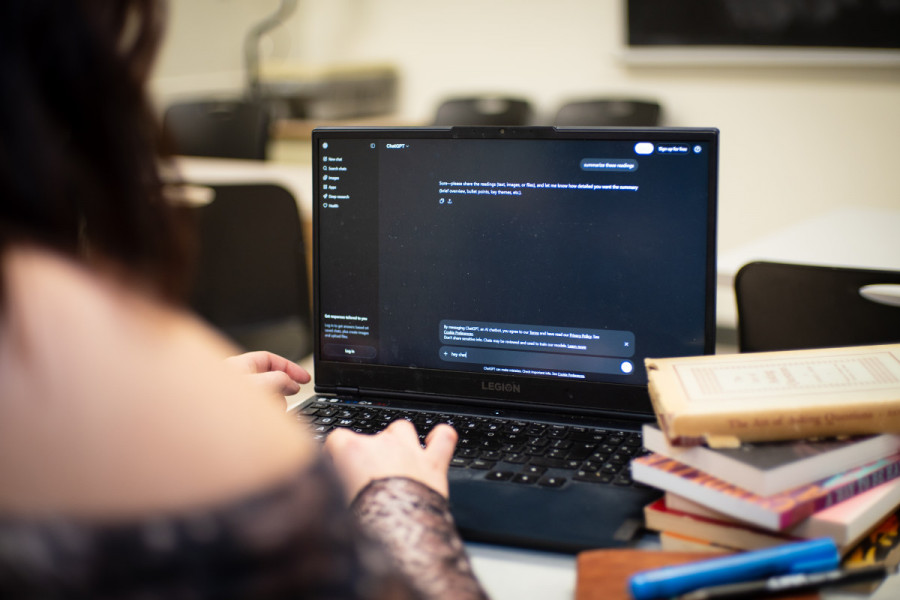

Artificial intelligence is already part of student life, and pretending it doesn't exist is unrealistic. The real question isn’t whether students will use AI. It’s how.

When used transparently and within clear rules, AI is not a shortcut to cheating but a tool that can make learning more accessible, creative and efficient.

AI can act as a digital assistant: helping organize ideas, clarify complex concepts, fix grammar, debug code or provide feedback on drafts. This doesn’t replace learning; it supports it. A student who uses AI to help restructure a paragraph or check their logic still has to understand the content.

Critics argue that AI weakens critical thinking and encourages dependency. That risk is real, but it comes from misuse. If institutions clearly define what is allowed and what is not, students can use AI without compromising their learning or crossing ethical lines.

For example, many universities now allow AI use for brainstorming or editing drafts, but consider submitting AI-generated work as your own to be academic misconduct.

At institutions like the Massachusetts Institute of Technology, students are encouraged to experiment with AI tools as long as they disclose how they were used and remain responsible for verifying the information. At Stanford University, AI assistance is treated like receiving help from another person.

AI can also lower barriers for students who already face disadvantages. Non-native speakers can use it to improve clarity without losing their voice.

Students with learning disabilities can break down dense readings into manageable summaries. In these cases, AI functions like assistive technology, similar to spellcheckers or calculators.

Tools that increase access to education should not be treated automatically as threats.

Banning AI outright ignores how education has always adapted to new tools. We didn’t ban search engines because students might plagiarize; we taught them how to research properly. We didn’t ban word processors because they made writing easier; we focused on ideas instead of handwriting.

In that sense, AI is not a trap by default. It becomes one only when students are never taught how to use it critically.

Using AI critically means remaining aware of its limitations and dangers, and understanding how to work with emerging technology despite its inherent biases.

Termed "AI literacy," this philosophy is encouraged by initiatives like the Centre for Artificial Intelligence Ethics, Literacy and Integrity at the University of Calgary.

In its own way, AI is a microscope that exposes the long-standing, damaging power structures inextricably tied to the digital, whose frameworks are the source of great injustice. Teaching responsible AI use is instrumental to uncovering and combating these structures in the classroom and beyond.

This article originally appeared in Volume 46, Issue 11, published March 17, 2026.
